Supernova | Generative AI and Creative Work

I was a guest at a comic convention and everyone wanted me to talk about AI.

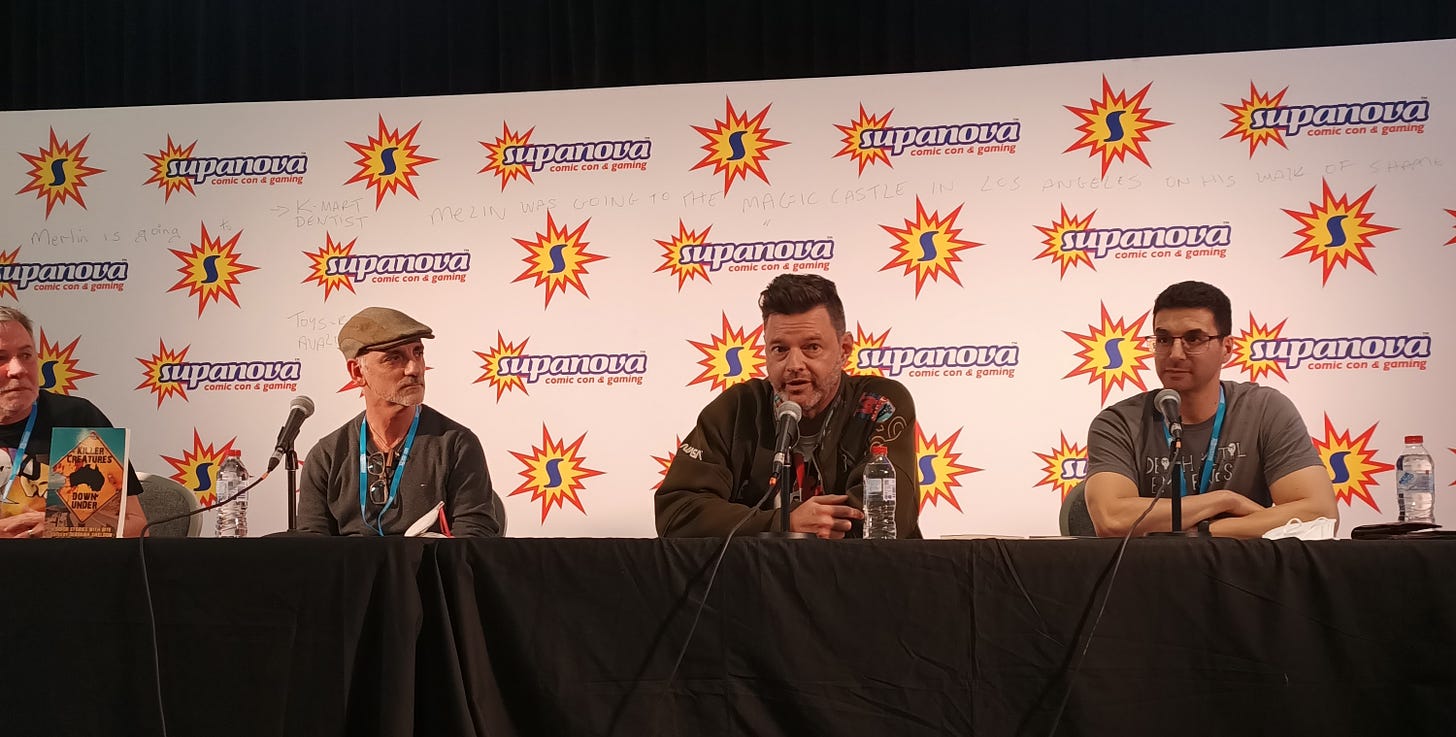

I was a guest at the Supanova Comic Convention here in Melbourne last weekend. For those who are unfamiliar, this means that I spent most of two full days, 10am-6pm, sitting at a table selling books I’ve written, promoting my new comic, chatting with nerds and cosplayers, and talking shop with a bunch of other writers and artists. On the Sunday afternoon I participated in a panel discussing some of the work put out by my Australian publisher, IFWG.

It was a fun, exhausting couple of days, but one thing that stands out to me now is the fact that everyone wanted to talk to me about generative AI, including the host of Sunday’s panel. I have promised that I will be covering this topic on this Substack, but there’s a lot to talk about and it’s changing more quickly than I can write about it. Today I’m going to do a very broad introduction to generative AI and the issues it creates for creative workers. In future posts I’ll look at more specific aspects of the technology and how that will affect different creators in different media.

What do I know, anyway?

When I’m not writing stories about arrogant rockstars, sociopathic wizards, and suburban Satanists, I am a data scientist and a software architect. My job is to work out how to leverage AI and machine learning in the records and data trust space. I’ve been doing this successfully for a number of years now.

Last year I published an academic paper comparing Large Language Models (like ChatGPT) to statistical machine learning algorithms for classifying text, and I have a chapter about LLMs in an upcoming non-fiction book about Mary Shelley’s creation, A Vindication of Monsters. So, while I didn’t design any of these AIs, I have a professional understanding of how they work and what they’re good for. Today I want to share some of that understanding with you.

The threat of generative AI

From a prompt of just a few words, generative AIs can write essays, poems, stories. They can produce fully rendered artworks. They can synthesize music and human speech. They can write code. Creative workers who do any of these jobs on a professional basis (i.e. it pays their bills) rightly feel threatened by these advances.

It’s true that every new advance brings fear. I am not old enough to remember Harlan Ellison railing against word processors, but I do remember when digital art started to become common amongst comic creators. There was immediate outcry that it wasn’t the same, that work on traditional media was better, that crucial skills were being lost. The same again for when those artists started to use 3D modelling software. Now these tools are both prevalent and uncontroversial.

But generative AI is different. Word processors, 3D renderers, digital painting, and even stock photography are all tools that a creative practitioner can use to help them compose their work. The word ‘compose’, I think, is the key to the current angst. Unlike those older tools, generative AI is able to compose finished (or finished-seeming) work with only minimal guidance from a human, much less a skilled artist.

Like those other tools, generative AI is here now, and it’s not going away. It could be catastrophic for the livelihoods of creative workers, but it presents opportunity, also.

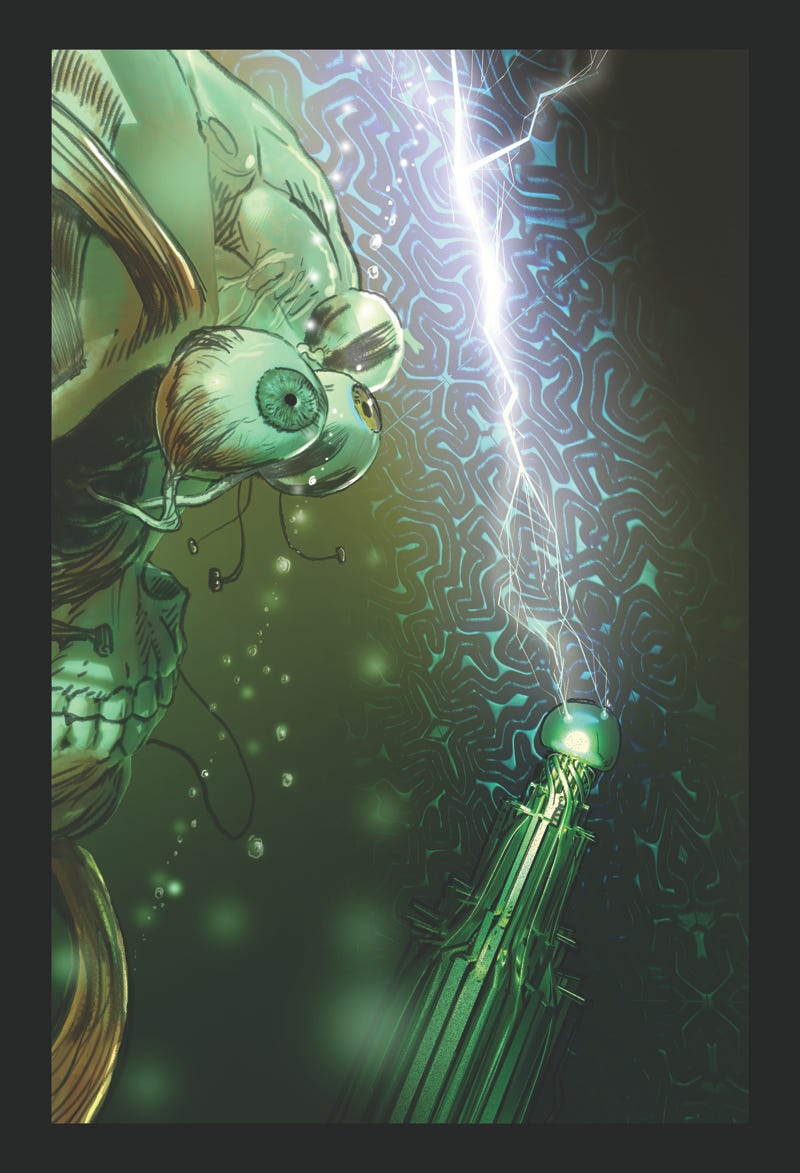

Before we go any further, we need an understanding of how these models work and what they do. So please indulge me: this will be a bit technical, but I will spice it up with some unseen panels from Frankenstein Monstrance, too. Cool? Cool.

What exactly are these things?

Generative AIs are neural networks which are trained to generate text or images (or sound) using vast amounts of data. A neural network is a class of machine learning algorithm that is crudely analogous to a human brain, in that it is composed of layers of ‘neurons’ that are highly interconnected. Sometimes neural net AIs are also referred to as ‘deep learning’ models, because the very large and very powerful models we’re seeing today have many many layers.

Like any machine learning algorithm, a neural network must be trained to do things using example data. The more examples we use, the more skilled the network will become.

How do we train them?

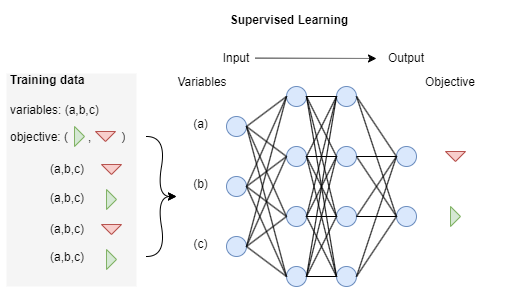

Machine learning algorithms are typically trained in one of two ways: supervised and unsupervised learning. In supervised learning we know the objective (or ‘label’ or ‘target’) for every training example. We are trying to teach the algorithm to model the objective from the characteristics (variables) of each example, so it can predict the objective when we present it with examples not seen during training.
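If you like to see ideas as code, here is a minimal sketch of supervised learning in Python. It is a toy, not how any production system works: the page counts, the labels, and the nearest-centroid method are all assumptions I have made up for illustration.

```python
# Toy supervised learning: every training example comes with a label.
# Features are (page_count, panels_per_page); the numbers are invented.
training = [
    ((24, 5.0), "comic"),
    ((32, 6.0), "comic"),
    ((28, 4.5), "comic"),
    ((300, 0.0), "novel"),
    ((420, 0.0), "novel"),
    ((350, 0.1), "novel"),
]

def train(examples):
    """Learn one average point (a centroid) per label."""
    sums, counts = {}, {}
    for features, label in examples:
        running = sums.setdefault(label, [0.0] * len(features))
        for i, value in enumerate(features):
            running[i] += value
        counts[label] = counts.get(label, 0) + 1
    return {label: [s / counts[label] for s in sums[label]] for label in sums}

def predict(model, features):
    """Pick the label whose centroid is closest to the new example."""
    def distance(centroid):
        return sum((a - b) ** 2 for a, b in zip(features, centroid))
    return min(model, key=lambda label: distance(model[label]))

model = train(training)
print(predict(model, (30, 5.5)))    # an example not seen during training
print(predict(model, (380, 0.0)))   # another unseen example
```

The important part is the shape of the process: labelled examples go in, and the trained model makes a guess about examples it has never seen.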

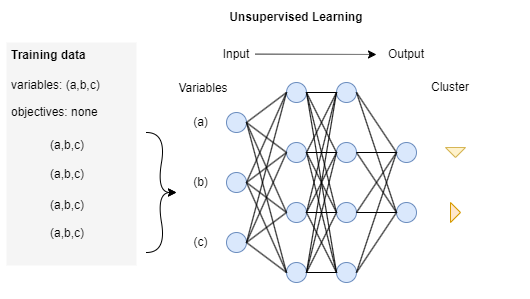

In unsupervised learning we don’t know the objective up front; we are just trying to get the algorithm to find clusters or patterns in the training data.
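As a rough illustration, here is unsupervised learning sketched as a tiny clustering routine. The page counts are invented, and k-means is just one clustering method I have picked as an example; nothing here is specific to generative AI.

```python
# Toy unsupervised learning: no labels, just find groups in the data.
# The values are invented page counts for a shelf of books.
page_counts = [22, 24, 28, 32, 290, 310, 350, 420]

def k_means_1d(values, k=2, rounds=10):
    """Simple one-dimensional k-means clustering (k must be at least 2)."""
    ordered = sorted(values)
    # Start with centres spread across the sorted data (an arbitrary choice).
    centres = [ordered[i * (len(ordered) - 1) // (k - 1)] for i in range(k)]
    for _ in range(rounds):
        clusters = [[] for _ in range(k)]
        for v in values:
            nearest = min(range(k), key=lambda i: abs(v - centres[i]))
            clusters[nearest].append(v)
        # Move each centre to the average of its cluster (keep it if empty).
        centres = [sum(c) / len(c) if c else centres[i]
                   for i, c in enumerate(clusters)]
    return clusters, centres

clusters, centres = k_means_1d(page_counts)
print(clusters)  # -> [[22, 24, 28, 32], [290, 310, 350, 420]]
```

Nobody told the algorithm that one group is comics and the other is novels. It only noticed that the numbers fall into two clumps.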

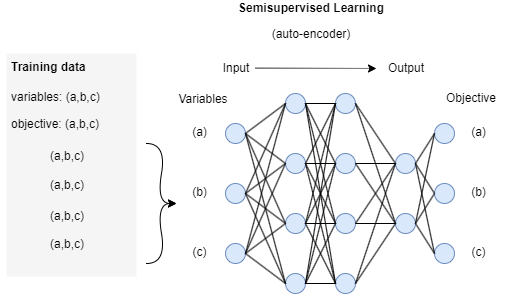

Generative AI uses a hybrid approach called semi-supervised learning.

Most data does not have an objective: it’s just an observation or an example of text, or a picture, or an audio recording. For this reason, we train a neural network in an unsupervised mode to replicate its input variables. This type of neural net is called an autoencoder, and it is the key to generative AI.
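Here is a minimal sketch of that idea, assuming the simplest network I can get away with: two inputs squeezed through a single hidden value and then rebuilt. The data, the starting weights, and the learning rate are all invented, and real generative models are enormously larger and more sophisticated.

```python
# Toy autoencoder: squeeze 2 numbers through 1 hidden value, then rebuild them.
# There are no labels; the training target is simply the input itself.
data = [(0.1, 0.2), (0.2, 0.4), (0.3, 0.6), (0.4, 0.8), (0.5, 1.0)]

encoder = [0.5, 0.5]  # weights: 2 inputs -> 1 hidden value (arbitrary start)
decoder = [0.5, 0.5]  # weights: 1 hidden value -> 2 outputs (arbitrary start)

def reconstruct(x):
    hidden = encoder[0] * x[0] + encoder[1] * x[1]
    return hidden, [decoder[0] * hidden, decoder[1] * hidden]

def total_error():
    """Sum of squared differences between each input and its reconstruction."""
    return sum((o - t) ** 2 for x in data for o, t in zip(reconstruct(x)[1], x))

initial_error = total_error()
learning_rate = 0.1
for _ in range(500):
    for x in data:
        hidden, output = reconstruct(x)
        errors = [o - t for o, t in zip(output, x)]
        # How much the hidden value is to blame, computed before any update.
        blame = sum(e * d for e, d in zip(errors, decoder))
        for j in range(2):
            decoder[j] -= learning_rate * 2 * errors[j] * hidden
            encoder[j] -= learning_rate * 2 * blame * x[j]
final_error = total_error()
print(initial_error, final_error)
```

To copy its input through that narrow middle, the network has to discover the pattern in the data (here, that the second number is always double the first). That discovered pattern is the general knowledge.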

Autoencoders build up general knowledge about how language (or images, or music) works, which can then be applied to specific tasks that do have an objective, such as classifying information, predicting outcomes, authoring text, drawing pictures, or writing code. This is called pre-training.

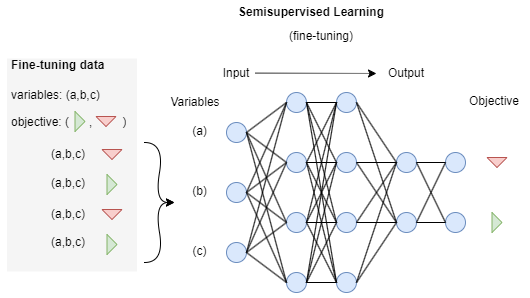

A pre-trained model can be ‘fine-tuned’ to some well-defined objective using supervised learning. This results in the best of both worlds. We have a pre-trained model that has been educated with huge amounts of general data, and now we can use a small amount of data we have that has been annotated with our objective (e.g. draw a picture like Jason Franks) to train it to that specific task, leveraging everything it already knows to improve its skill. This is called transfer learning.
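A rough sketch of the idea follows. The `PRETRAINED` weights are hypothetical stand-ins for what pre-training would produce, the four labelled examples are invented, and freezing the pre-trained layer while training only a small new 'head' is a simplification I have chosen; real fine-tuning often adjusts the pre-trained weights as well.

```python
import math

# Pretend these weights came out of pre-training on huge amounts of
# unlabelled data. They are hypothetical and stay frozen below.
PRETRAINED = [[0.9, 0.1], [0.2, 0.8]]

def features(x):
    """Run the input through the frozen, pre-trained layer."""
    return [max(0.0, sum(w * v for w, v in zip(row, x))) for row in PRETRAINED]

# A small labelled set for the specific task (1 = 'in my style', 0 = not).
labelled = [((1.0, 0.1), 1), ((0.9, 0.2), 1), ((0.1, 1.0), 0), ((0.2, 0.8), 0)]

head = [0.0, 0.0]  # the only weights we train
bias = 0.0

def predict(x):
    """Probability that x belongs to class 1."""
    z = sum(w * f for w, f in zip(head, features(x))) + bias
    return 1.0 / (1.0 + math.exp(-z))

for _ in range(200):
    for x, y in labelled:
        error = predict(x) - y          # computed before updating anything
        f = features(x)
        for j in range(2):
            head[j] -= 0.5 * error * f[j]
        bias -= 0.5 * error

print(round(predict((0.95, 0.15)), 3), round(predict((0.15, 0.9)), 3))
```

Only four labelled examples were needed here, because the frozen layer had already done the heavy lifting of turning raw input into useful features.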

These models are sub-logical. Any knowledge learned by a neural network is encoded in the weights between neurons: there is no explicit reasoning present. There are no rules or facts; the ‘knowledge’ encoded in the model is purely statistical. A network will transform the input data (a text prompt, in most cases) by passing the data through all of the trained layers of the network, which will transform it into the output it thinks is most probably correct without anything resembling a logical sequence of decisions.
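To show what 'passing the data through the layers' looks like, here is a tiny two-layer network sketched in Python. The weights and the labels are made up for illustration; in a real model there would be billions of weights, all learned during training.

```python
import math

# A tiny two-layer network with hypothetical weights.
layer_1 = [[0.8, -0.4], [0.3, 0.9], [-0.5, 0.2]]   # 2 inputs -> 3 neurons
layer_2 = [[1.0, -1.0, 0.5], [-0.7, 0.6, 0.9]]     # 3 neurons -> 2 outputs
labels = ["cat", "dog"]

def dense(weights, inputs):
    """Each neuron outputs a weighted sum of its inputs."""
    return [sum(w * x for w, x in zip(row, inputs)) for row in weights]

def relu(values):
    """Neurons with a negative sum stay silent."""
    return [max(0.0, v) for v in values]

def softmax(values):
    """Turn raw scores into probabilities that sum to 1."""
    exps = [math.exp(v - max(values)) for v in values]
    return [e / sum(exps) for e in exps]

def forward(inputs):
    hidden = relu(dense(layer_1, inputs))
    return softmax(dense(layer_2, hidden))

probabilities = forward([1.0, 0.5])
answer = labels[probabilities.index(max(probabilities))]
print(answer, probabilities)
```

Notice that there is not a single `if` statement about cats or dogs anywhere in there. The answer falls out of multiplication and addition.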

There is a divide in the AI community, going back more than half a century, between proponents of statistical and classical (deductive) approaches to problem solving. Neural networks are firmly in the former camp and we truly are seeing their power now, but this is an important distinction to remember, because it also highlights the failings we are seeing in generative AI.

The failings of generative AI

The tech business, in typical fashion, has been hyperbolic about what the technology can do. When pressed to explain its limitations their response has been grudging and unclear. So let’s fix that.

The problem with every AI we have today is that it’s very powerful on narrow tasks, and very stupid about anything outside of those bounds. In the case of generative AI this is less apparent because their output is designed to be convincing, at least on a surface level. Convincing enough that a Google engineer lost his job because he came to believe that one of Google’s LLMs was sentient. These models are trained to give you what they think you want. But they have no self-awareness, no initiative, and no ability to experience the world. They are literally trained to fool you into believing them.

Remember, these models are statistical. They don’t know facts and they cannot make reasoned decisions; they can only give you what they think is the most probable answer. An LLM like ChatGPT is trained to be able to manipulate language, not to serve truth—sounding human is more important than being accurate.
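A crude way to see this for yourself is a word-counting model that always picks the most common next word. This is a deliberately simplistic stand-in, not how ChatGPT is built, and the 'training corpus' is invented so that the most common claim in it happens to be false.

```python
from collections import Counter, defaultdict

# A made-up training corpus. The most frequent claim in it is wrong.
corpus = (
    "the moon is made of cheese . "
    "the moon is made of cheese . "
    "the moon is made of rock . "
).split()

# Count which word follows which.
following = defaultdict(Counter)
for current, nxt in zip(corpus, corpus[1:]):
    following[current][nxt] += 1

def most_probable_next(word):
    """Return the word most often seen after `word` in the corpus."""
    return following[word].most_common(1)[0][0]

def complete(prompt, length=4):
    words = prompt.split()
    for _ in range(length):
        words.append(most_probable_next(words[-1]))
    return " ".join(words)

print(complete("the moon"))  # -> the moon is made of cheese
```

The model is not lying. It has no idea what a moon is. It is just reporting what usually comes next.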

GPT’s Codex model writes what it thinks computer code should look like, and maybe it will work to solve the problem—or maybe it won’t. Code is more orderly and predictable than human language, so it’s pretty good at this. But it’s definitely not reasoning out problems like a human programmer.

Stable Diffusion and Dall-E do not know how many fingers are on a human hand, or how many teeth are in a human mouth. The fact that these models often get this stuff right is astounding, but in no way guaranteed. They do not know how to count.

Another failing is that machine learning models propagate biases from the training data. LLMs trained with English language text are of course going to reinforce Anglophone biases, for example. AIs cannot distinguish correlation from causation, so an ML model used to evaluate resumes might rank male workers higher in male-dominated industries, not based on merit, but because most of the successful candidates it was trained on are male.
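The resume example can be sketched in a few lines. The hiring history below is invented, and the scoring rule is a deliberately naive assumption of mine rather than any real screening product, but it shows how a skew in the data becomes a skew in the output.

```python
from collections import Counter

# Invented hiring history for a male-dominated industry: (gender, was_hired).
history = (
    [("male", True)] * 8 + [("male", False)] * 2
    + [("female", True)] * 1 + [("female", False)] * 1
)

hired = Counter(gender for gender, was_hired in history if was_hired)
total_hired = sum(hired.values())

def score(gender):
    """Naive 'model': how much does this candidate resemble past hires?"""
    return hired[gender] / total_hired

print(score("male"), score("female"))
```

Merit never enters into it. The model has simply learned that past hires were mostly male and rewards candidates for matching that pattern.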

While it is possible to install heuristic guard-rails into generative AIs to prevent them from generating hate speech, pornography, disinformation and other harmful material, these are never comprehensive. Already there is a contingent of hackers making a sport of bypassing them.

Ethical problems with generative AI

These models are trained using vast amounts of data, which has been scraped from the public internet. While this data is publicly available, it has been used without permission from its creators.

Worse still, some companies have used artists’ names as part of their sales pitches. Stable Diffusion’s early publicity suggested that users ask the AI to paint like the artist Greg Rutkowski, whose work was scraped without his permission and who has not been paid for the use of his name. Now the vast amount of AI generated artwork created to mimic him is drowning out his own visibility.

At a most generous assessment, these AI developers are profiting from creators’ bodies of work without giving any thought to what this will do to their livelihoods or how they should be compensated.

What does all this mean for creative workers?

While I cannot predict the future, I can make some guesses, and I plan to discuss it more in future posts. But here are some opening thoughts:

Having a magic box that can create any picture or write any story that you ask it to is a very attractive proposition and the general public wants to have this capability. I cannot tell you how satisfied they will be with the end products once the novelty wears off, but people want to be able to do this and will resent any attempt to withdraw the technology. Which is not to say that it’s fair to the creators whose work was plundered to train the model, or to the practitioners losing income.

This is absolutely going to hurt early career creative workers, who will be unable to build careers on lower profile gigs. By this I mean artists doing small commissions of people’s D&D characters; copywriters producing articles or marketing copy for websites and blogs; web designers coding personal websites. AI technology is really gaming the Fast/Good/Cheap triangle and it’s going to be very difficult to compete with ‘immediate’ and ‘free’ on the sole basis of quality.

We’re seeing covers from major comics publishers (arguably) generated by AI. Many of these books, especially original, creator-owned work, run on a very tight budget and the cover is an expensive line-item that is an obvious candidate for AI treatment. The money saved on a cover artist may go towards paying other artists for more interior pages. Distasteful as it is, this equation may still be putting more net income into the artists’ pockets.

The adoption of AI generated material is certainly going to homogenize popular culture further. I believe that we have already seen this in the pop music industry over the last 25 or so years. Listeners are now accustomed to auto-tuned vocals and heavily produced work, where every blue note is tuned and organic variations in rhythm are quantized out.

I think we’re already seeing something similar in book publishing, both traditional and indie, where respectively similar is more saleable and quantity is the best way to generate income. Generative AI is not going to slow that trend. I’m sure we’ll be seeing it used more and more in film-making, games, animation… the whole banana. Every banana.

But I don’t think it’s all bad news. I’ve seen a couple of cases where creators have used AI to produce some genuine art. Loab, by the artist Supercomposite, is art in my considered opinion. Supercomposite discovered the character using image generation, but the art isn’t in the images, so much as the way she has contextualized and sequenced them into something that is genuinely disturbing. The way that she has composed them into a story. I don’t believe that this is any different to the automatism practiced by the surrealist movement a century ago.

There are opportunities for commercial creators to exploit this technology, and not just to assist their practices and handle drudge work, if we can orchestrate favourable legislative and licensing terms. I am fully aware that this is not a business model that most practitioners want (this includes me), but it’s something we need to consider as AI starts to devalue us. We need to establish ways to use AI for our own benefit before the big players cut us out of the equation.

That’s barely the start of what I have to say about AI. Perhaps I’ll write separate posts for different media, for art and writing and music. I might bring in some interviews with creative people who work in different media. Let me know what you’d like to see and I’ll see what I can do.

Don’t worry, there will also be plenty of talk about books and comics. Next week I’ll have a cover reveal for the new US edition of Bloody Waters and a short story work. I may also be able to share some spandex content (I promise it’s not me with my undies on the outside.)

I should mention that you can now communicate with me on Substack Notes, if you feel so inclined. I’m still finding my feet there but it’s looking a whole lot more civilized than, say, Twitter… for now, at least.

Thanks again to Supanova for hosting us last weekend, and to everyone who came along to have a chat. Thanks to Tam Nation for making Monstrance #1 look so damn good!

Franksly yours,

— Jason