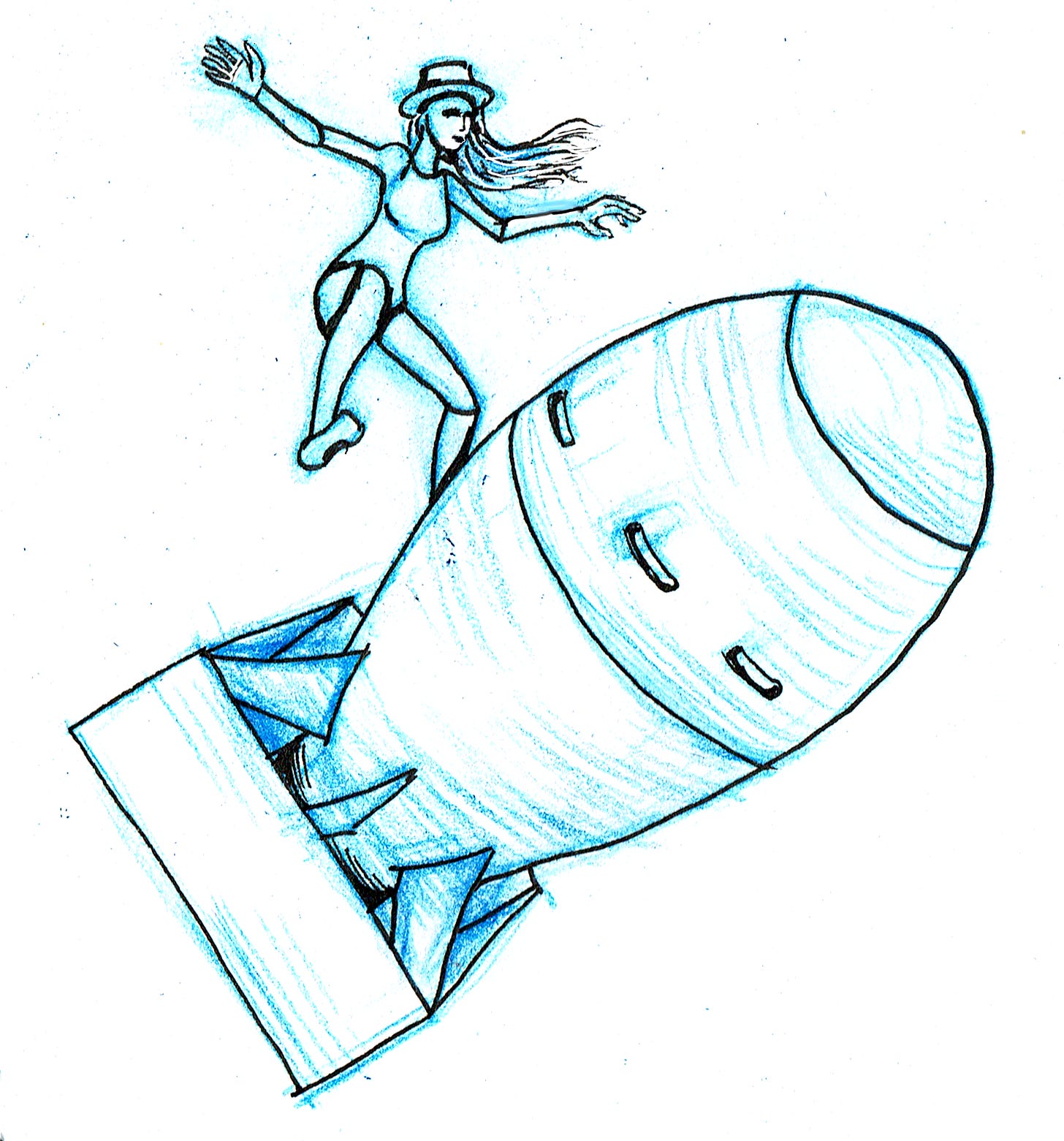

LLMs and the Business of Fiction: AI-Bombs for Barbie (part 4)

Continuing discussion about what AI means for our future as writers--and what we can learn from Barbenheimer.

I’ve been talking about the way that generative AI will affect the business of fiction for a couple of months now, and today I want to take a step back and try to look at the bigger picture. If you’ve been following these posts, we started with what LLMs are and what they can do, followed by a discussion of what trends in the business of fiction tell us about how LLMs are likely to be employed, and then a tactical look at how LLMs might be leveraged in different media. Let’s now adopt a strategic view, projecting from what we’ve seen through these first months of the mainstreaming of generative AI. I think there are valuable lessons in the Barbenheimer phenomenon which we should apply to this thinking.

The future might be an awful dystopia or it might not, but it will assuredly be different.

A Dystopian Outlook

Let’s look at some worst case scenarios. I’m as big a fan as anyone of the Terminator, but you’ll forgive me if I fail to indulge an AI apocalypse fantasy. I don’t think we’re headed there, for three reasons:

AI is stupid and I don’t believe we are close to AGI,

it’s too expensive to build enough killer robots, and

traveling backwards in time is bullshit (don’t @ me, I know).

I think any AI dystopia is likely to be political or economic before it’s military.

In the novel 1984, George Orwell imagines a world in which all writing is created by the Fiction Department in the Ministry of Truth. All plots are state-sanctioned and machine-generated, and there’s no room for human creativity there. Julia, a skilled operator of these book machines, has little interest in reading, and no wonder.

Orwell’s dystopia is an authoritarian one, modeled on Stalin’s USSR and Hitler’s Germany, in which the state controls the media its people consume. With the end of the Cold War and the collapse of the biggest communist regimes, barring the People’s Republic of China, I think we are less likely to fall into one of these scenarios. I’m not even sure you can still call China a communist country: to my uneducated eyes it has been behaving very much like a capitalist nation, sans democracy. While the regime there is extremely censorious, it is also an enormous financier and consumer of Hollywood movies. Russia, too, has become capitalist. While it is nominally a democracy, with Vladimir Putin at the reins for nearly a quarter of a century, it’s hardly a shining example.

Which is not to say that authoritarian regimes are necessarily communist, of course—just that they are undemocratic. Perhaps we in the West are headed for a new phase of capitalist fascism.

Perhaps that’s where we are headed also. We are seeing generative AI deployed to generate political disinformation on an unheard-of scale, both by domestic partisans and by foreign states. This is a technique known as the firehose of falsehood, in which the truth is drowned out by the sheer volume of propaganda. This, of course, is a methodology pioneered by the Soviets and currently associated with Putin’s Russia, but it’s also a side effect of the growing volume of garbage AI content. Search engines have been hoovering up AI hallucinations and errors, and we are seeing information sources that we formerly trusted becoming polluted with nonsense and misinformation.

While democracies may be under threat, it looks like capitalism is growing out of control. In our actual, current dystopia, we can, in theory, consume whatever we want, within the confines of “the market”.

In the publishing world there is a concept called “writing to market”, in which authors try to target their work based on which genres are selling: what page count, what running time, even what specific tropes. The idea has many proponents and many opponents. The market is difficult to predict, so authors run the risk of missing the trend, or of anticipating the trends wrongly. In the Penguin Random House/Simon & Schuster antitrust proceedings last year, publishers came right out and said, if I may paraphrase, that they don’t know what they’re doing and that nobody can predict the trends.

But the big corporate retailers and streaming services now have unprecedented data on which to model exactly these trends. With generative AI on board we could see commercial publishing targeting those trends precisely and pushing work out to market faster than ever. They could drown out original work and leave us with an ouroboros of derivative pap, recycling and remixing the same plots and characters forever.

Howard Chaykin’s seminal political satire/SF comic series American Flagg! is about an actor, Reuben Flagg, who plays a space policeman (a ‘Plexus Ranger’) on a popular TV show, until he is replaced by an AI version of himself and finds himself drafted into the actual Plexus Rangers. The series was originally published in 1983, and we have already seen some of its ideas come to pass. (Go read Howard’s Substack. He knows more about comics publishing and Hollywood than I ever will.)

It seems clear that the owners of most franchise IP are keen to leverage generative AI to scale up production of their material. I think this will still require human direction (a writer’s job for these companies might be to coach the LLM into following a plot and producing dialogue, then to edit the output into some form of coherence), but likely at a reduced salary and with no slice of ownership or residuals. This flood of new content would enable a data-driven approach to see what material will play best, and might even be used to tweak or tune finished media to maximize audience delight.

In the tech business, ‘delight’ now indicates showy but not necessarily useful features. In other media, creators who focus on producing moments of delight often do so at the expense of story coherence. If someone sells you a delight, do not expect it to be satisfying for long.

This cycle of life imitating art imitating life feels to me closer to where we are headed. American Flagg! is set in the 2030s. All we are currently lacking is the talking cats and the off-world colonies.

A-bombs for Barbie

I don’t think this model can succeed for long. Readers crave novelty and I think we are seeing that, both in the indie books boom and in the amazing array of new and diverse talent we’re now finally seeing. That can’t happen if everything is data-driven. If we do see this kind of future, my hope is that it won’t take long before it loses its attraction, the trends flatten, and the Wall Street grifters move on to the next Ponzi scheme.

I think we have seen this borne out in the last few weeks at the box office, with the Barbenheimer phenomenon giving us two of the highest-grossing films of the year so far, Barbie and Oppenheimer.

Both films are powered almost entirely by auteur directors. Oppenheimer is a serious biopic about the architect of the atomic bomb, Robert Oppenheimer, directed by very serious director Christopher Nolan. Barbie is a quirky and artistic magical realist take on the toy franchise, directed by quirky auteur Greta Gerwig. Nolan, who is famous for making big budget, challenging genre material, established himself as a big budget director by directing three Batman films. Gerwig, like Nolan, began her career as a director making smart, smaller budget films, which quickly attracted Oscar nominations.

It can hardly have escaped the notice of the studios that the success of these films is almost certainly down to talent. The Batman property was ailing after a series of flops and had been off the big screen for a number of years when Nolan took the helm and turned it around. Barbie is a new property on the big screen (though not the small), but the source material is famously shallow (as opposed to Batman’s pre-Tim Burton fame for being camp), and Gerwig’s subversive feminist take has obviously struck a chord. I don’t think studios can expect similar results from AI-generated media. They just want to cash in on the success with a firehose of AI-generated spinoffs to maximize their return on investment in people, and to drown out the competition.

This of course assumes that they can surmount the legal barriers. AI-generated work can’t be copyrighted, so what happens when you are using AI to generate work based on trademarked IP? I don’t know, but I feel it’s a safe bet that the franchise owners have enough lawyer power to find their way through it.

Barbenheimer shows that there is a consumer appetite for new ideas and fresh properties. In the case of Barbie, it turns out that there is indeed a market for works created by women, about women, and for women. (Who knew? Women are only 51% of the population.) There is always going to be a demand for work authored by humans and perhaps working in franchised IP can help creators to build a platform from which they can launch original projects, as Nolan has done and I expect Gerwig will do as well. No IP is going to hold sway forever. Sooner or later audiences want something new—the only question in my mind is what we can do to shorten the cycle.

This trend is going to make it even harder for writers to break ground with new ideas, at least in the short term, and particularly in higher-cost media like film and TV. Perhaps some economic contraction will make smaller-budget fare more appetizing to risk-averse studios.

I still think novelists and comics creators are less threatened by AI. Publishing is small potatoes next to the screen arts and the gaming industry, but it does serve as a feeder for adaptation and I hope that will drive increased investment in human writers. The payoff isn’t in book sales, but in the bonanza of adaptation. It’s sad to think that this motivation might come to drive the venerable publishing business, but at this juncture any kind of investment that goes to human creators will be an improvement.

Undervalued though we are, we are the originators of new ideas. I don’t think storytelling of any value can exist without us writers and artists. It is our job to spin truth from lies, story from information. In a world in which the truth is unclear and any source of information is increasingly questionable, we have our work cut out for us.

We’re straying a bit from the topic of LLMs, now. Next post in this series will look at some next steps that I think we, the writing community, should be considering. Generative AI is going to be a pervasive presence in our future, whether we like it or not, and the worst thing we can do is sit helplessly by and let it happen to us.

In other news, my 2022 novel X-Dimensional Assassin Zai Through the Unfolded Earth has made the preliminary ballot for the Ditmar awards—Australia’s equivalent of the Hugo Awards. It is an absolute honour to see my book up there alongside books by Alan Baxter, TR Napper, Robert Hood, and Trent Jamieson.

Also, a reminder that my short novel Shadowmancy will be out in the US on September 5th, and is now available for preorder. It is also available on Amazon and Barnes and Noble. Australian readers can of course find copies here.

Finally, I will be helping David Blumenstein to launch his excellent nonfiction comic about AI art, Octane Render, on September 6th at the Sticky Institute here in Melbourne. Stay tuned for details.

As always, I salute you for reading this entire rambling missive.

Franksly Yours,

— Jason